Disinformation and the war

Tsunami of deliberately false narratives

Welcome. I’m glad you’re here.

This newsletter covers what the World Economic Forum calls one of the world’s biggest problems. Disinformation (deliberately manufactured and distributed lies with the goal of fooling you for the financial, political or personal benefit of someone else) is about much more than politics. Its tentacles reach into every nook and cranny of our society. You are bombarded with Disinformation every day. It can affect your money, your job, your belief system, and you might not even know it. And it’s getting worse.

I’m Paul Brandus, an independent, Washington, D.C.-based journalist. I work for no one, am intimidated by no one, and cover this issue with neither fear nor favor. Here’s my LinkedIn bio if you want to know more. I hope you’ll support my work by subscribing — and sharing this with others. Thank you.

Now the latest.

U.S./Israeli attack on Iran unleashes tsunami of Disinformation (and see next two items for additional context)

Perhaps you’ve heard the phrase “the fog of war.” It refers to the uncertainty and confusion experienced during a military conflict, when information is often incomplete or misleading. The phrase is believed to have originated with Prussian military theorist Carl von Clausewitz (1780–1831).

Such uncertainty and confusion can only be heightened in this age of artificial intelligence and social media, a potent and, if abused, malicious combination.

Since it began Friday-into-Saturday Washington time, the surprise American-Israeli attack on Iran has unleashed a torrent of such fog. As Broderick McDonald of Oxford University and King’s College London writes, the war “has already spiraled into a flood of AI-generated disinformation. Deepfakes & AI bot networks have made it the most polluted information space we’ve ever seen in armed conflict.”

I’ll return to McDonald, whose team is just out with a 40-page report explaining why this is now worse than ever, in a minute. But first, a few examples of Disinformation from the past few days:

1. Spoofed Military Directives

Claim: A viral message that began circulating on March 1, 2026, purportedly from U.S. Cyber Command, instructed all U.S. service members to immediately disable location services and uninstall specific commercial apps like Uber and Snapchat, claiming they had been compromised by Iranian intelligence.

Reality: U.S. Central Command (CENTCOM) confirmed the message was entirely false. It appeared to be a psychological operation aimed at disrupting military communication and creating friction between the DOD and private tech companies.

2. AI-Generated “Battlefield” Evidence

Within hours of the first strikes, social media platforms were inundated with high-definition “footage” of the aftermath.

Claim: The “Sink the Lincoln” Hoax: AI-generated images began trending that showed the USS Abraham Lincoln aircraft carrier on fire and sinking in the Persian Gulf.

Reality: CENTCOM was forced to post real-time verification from the carrier deck on X to prove the vessel was undamaged and still launching sorties.

Claim: Multiple video compilations purported to show “Iranian base explosions.”

Reality: Fact-checkers (including Full Fact and PolitiFact) found that the videos contained classic AI artifacts: warped doorframes, 2D overlays that didn’t move with the camera, and “frozen” background characters who failed to react to the blasts.

3. Decontextualized “Ghost” Footage

This remains the most prolific tactic due to its speed.

Claim: A massive explosion video posted on March 1 claimed to be a direct Iranian retaliatory hit on Tel Aviv.

Reality: It was archival footage of the 2015 chemical warehouse explosion in Tianjin, China.

4. “Regime Change” Content

Claim: Deepfake news anchors aired reports claiming that Evin Prison had been liberated or that the regime had already collapsed.

Reality: It hasn’t collapsed.

5. Other

Claim: Muslim politicians in the West have mourned the death of Ayatollah Khamenei.

A BBC Verify journalist, Lucy Gilder, writes that “Several posts claiming to show two UK MPs participating in a one-minute silence to mark the death of Iran’s Supreme Leader Ayatollah Ali Khamenei have drawn millions of views on X.”

Reality: Gilder notes that the phony posts used photos of the MPs taken before Khamenei was killed at the weekend and there’s no evidence either politician did this.

Claim: Another shows UK Home Secretary Shabana Mahmood wearing a headscarf while talking to a group of Muslim men. The text says she “joined her constituents to hold a one-minute silence for Ayatollah Khamenei today in Birmingham”.

Reality: Gilder says that “A simple reverse search of this image using Google shows it was taken in September 2024 when Mahmood visited Southport Mosque following riots that summer. She posted about the visit on her own website.”

I’m barely scratching the surface here. The question: Why do so

#####

“The most polluted information space we’ve ever seen in armed conflict.”

More now from Oxford’s McDonald and his early look at the accelerating pace and sophistication of Disinformation as the war in the Middle East continues to unfold. Again, he says it “has already spiraled into a flood of AI-generated disinformation. Deepfakes & AI bot networks have made it the most polluted information space we've ever seen in armed conflict.”

The report from McDonald and his team is, he claims, “the first report of its kind to systematically analyse how AI information threats — deepfakes, automated bot network, chatbot hallucinations — have contributed to sowing confusion and real-world violence around elections, terror attacks, riots, & armed conflicts.” Key points below:

From the Charlie Kirk Assassination, to the UK's Southport riots, to the Israel-Iran War, our study dissects how AI tools have been weaponised following major security incidents the past several years.

It is becoming alarmingly clear that AI Information Threats are now a fixture of major elections, terror attacks, armed conflicts, and civil demonstrations.

Threat actors can now produce & disseminate false & inflammatory narratives rapidly & at an industrial scale, pouring fuel on the fire of security events.

Perhaps even more worryingly, there is a great deal of uncertainty over whether the UK and other countries are prepared to deal with these risks.

But it’s not all doom and gloom. McDonald adds that his team has also listed several innovative ways that AI tools can help to strengthen crisis response measures.

Key recommendations include:

Establishing clear crisis response protocols for government departments on AI information threats that pose serious risks to public safety.

Releasing guidance for the public on the fact-checking limitations of AI chatbots, particularly during real-time events.

Creating new knowledge sharing channels in tech forums for AI companies to circulate threat intelligence on serious model vulnerabilities and corresponding solutions during live crises.

Encouraging social media platforms to update their Terms of Service to include new policies which prevent unverified content being displayed in users’ feeds during ongoing incidents to restrict the potential spread of misinformation.

#####

Another example of how your brain has “no delete” button

Disinformation always has a head start on the truth. A lie is manufactured and shared around the world with push-button ease. The truth is then forced to play catch-up. The problem? It rarely catches up. The lie has first-mover advantage and seeps into the mind, and once it’s there, it is hard to dislodge. The brain, after all, lacks a delete button.

Remember Pizzagate? It’s a great example. A decade ago, a conspiracy theory emerged claiming that a pedophilia ring linked to members of the Democratic Party was operating in the basement of a pizza restaurant in Washington, D.C., and that it was tied to the election campaign of Hillary Clinton. As weird conspiracies go, it’s hard to top that.

Some people will believe anything, including a North Carolina man who drove to Washington to investigate the conspiracy and fired a rifle inside the restaurant to break the lock on a door to a storage room during his search. In addition, the restaurant's owner and staff received death threats from conspiracy theorists.

Of course it was all rubbish, a deliberately malicious claim that has been — do we even need to say it again? (I suppose we must) — shown to be completely bonkers.

And yet, a decade later, it came up again, this time during the recent testimony of Clinton as part of a House committee’s investigation into the life and connections of the late child sex offender Jeffrey Epstein.

It’s another example of something I often discuss — namely that your brain has no delete button. Once it absorbs something, it will remain there — regardless of whether it is contradicted by subsequent information. One of the proponents of this theory is Dr. Stephen Lewandowsky of England’s Bristol University, with whom I spoke for a recent podcast:

Lewandowsky:

Whenever people are encountering information, they tend to believe it by default. Now, that makes a lot of sense, because 90% of the time when I’m interacting with the world and I talk to people outside, they’re going to tell me the truth. If I ask you what time it is, everybody will tell me the truth. So it’s a very sensible thing for our system to be built that way, that by default we accept everything as being true.

Now, the problem with that though is what happens if something turns out to have been false? Maybe somebody, by mistake, told me it was Tuesday rather than Monday when I asked them what day of the week it is. Then I have to update my memory and my mental model of the world. And the question then is, how do you do that?

Well, and that’s where things become very interesting, complicated, and also concerning when it comes to the welfare of our societies. And that is that in order to unbelieve something, people have to identify it as being false. You can’t just remove things from memory. Once you have encoded information in memory that you believe, you can’t just yank it out. Memory doesn’t work that way. There’s no delete button.

I use the word “unbelieving” just to characterize what it really is we have to achieve as human beings when somebody tells me that something I believe is wrong. And that turns out to be very difficult, because there’s no delete button in my brain, I can’t just say, oh, delete this piece of information. It can’t be done.

This, by the way, helps explain why fact-checking, a frequent tool of journalists, is often ineffective. They can point out a deliberate lie uttered by someone else and add the correct facts, but the audience for that original lie may have trouble “deleting” it from their minds, even if they wanted to.

#####

Russia’s information warfare targets Ukraine’s European allies

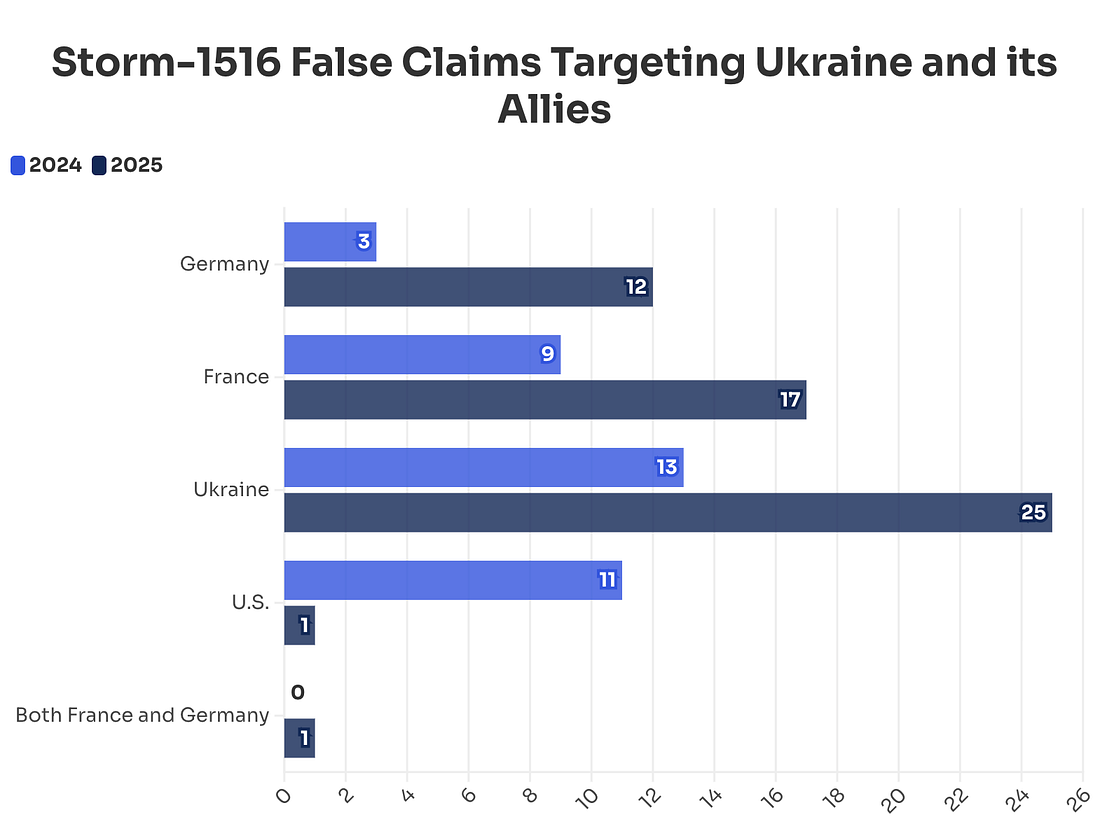

NewsGuard reports: “[A] Russian influence operation has shifted its strategy over the past year not only to spread false claims about Ukraine, but increasingly to aim disparaging narratives at French President Emmanuel Macron and German Chancellor Friedrich Merz. It’s an apparent effort to discredit two of Ukraine’s staunchest allies as they move in to fill the near-total reduction in U.S. financial support for Ukraine.”

It says:

The influence operation appears to have evolved as Europe replaced the U.S. as Ukraine’s biggest benefactor after President Donald Trump assumed office in 2025. Total U.S. aid to Ukraine fell by 99 percent during the year, according to the Kiel Institute for the World Economy, a German think tank. During this same period, European military aid increased by 67 percent, compared to the 2022-2024 average, the Kiel Institute reported.

Germany is now Ukraine’s biggest military aid donor — 9 billion euros in 2025 — and France’s Macron has emerged as a vocal champion of Ukrainian president Volodymyr Zelensky, advocating for sending European troops to Ukraine to support peacekeeping efforts.

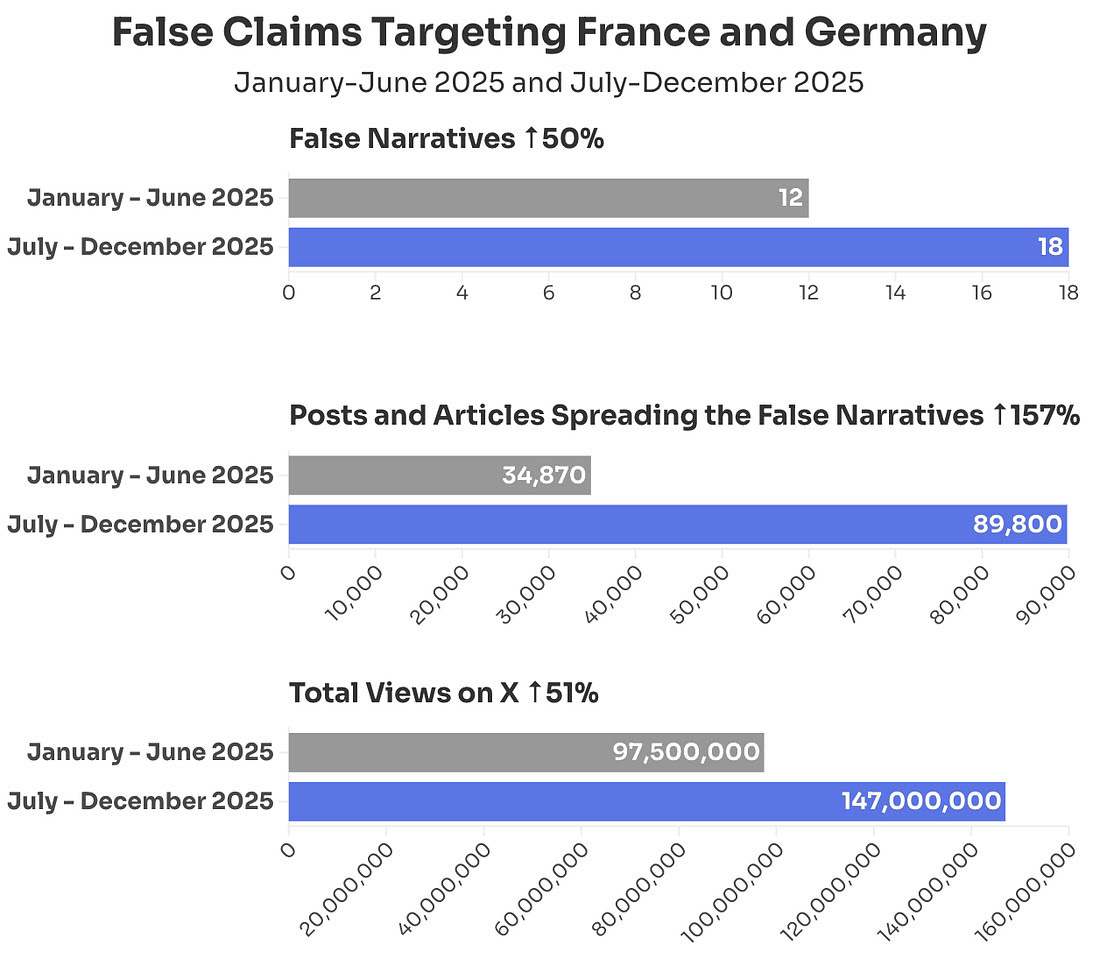

This compared to 12 claims totaling 97 million views in the first half of the year.

(The numbers in the chart above differ from those described at the introduction to this report because they account only for 2025, and do not include false claims from the first two months of 2026.)

Upcoming conferences and events

European Cybersecurity Skills Conference (March 2026): While focused on cybersecurity, this event in various European locations often covers the intersection of cyber threats and disinformation campaigns.

Voices Festival (March 10–12, 2026 | Florence, Italy): A festival dedicated to journalism and media freedom, specifically looking at the challenges journalists face in a climate of misinformation.

Cambridge Disinformation Summit (April 8–10, 2026 | Cambridge, UK): Hosted by the University of Cambridge, this summit explores systemic risks from AI and social media platforms. Registration is open until March 12.

Engaging with Conspiracy Theories, Fostering Democracy (April 9–10, 2026 | Prague, Czech Republic): A targeted academic conference at Charles University focusing on the drivers of conspiracy theories and disinformation studies.

RightsCon 2026 (May 5–8, 2026 | Lusaka, Zambia & Online): The 14th edition of this global summit on human rights in the digital age, with significant tracks dedicated to platform governance and disinformation.

IAIA26: Misinformation, Disinformation, Communication, & Impact Assessment (May 19–22, 2026 | Québec City, Canada): A unique conference focusing on how disinformation impacts public trust in scientific facts and environmental assessments.

Summer & Autumn 2026

Disinformation Summer Institute (June 2026 | Seattle, WA): A 4-day retreat at IslandWood (Bainbridge Island) focused on deep-dive research and collaboration.

Summer School on Misinformation, Disinformation, and Hate Speech (July 6–10, 2026 | Rome, Italy & Online): Organized by UNICRI (United Nations), this hybrid program provides tools to detect and debunk disinformation and understand its role in war and propaganda.

#Disinfo2026: EU DisinfoLab Annual Conference (October 6–8, 2026 | Vilnius, Lithuania): One of the largest annual gatherings for the counter-disinformation community, focusing on OSINT investigations and concrete solutions.

#####

Need a speaker for your event?

I speak to groups on the subject of Mis/Disinformation and can give a talk or host a roundtable at your event. Here’s what you can expect:

Key Themes: The Disinformation Threat Landscape

I’ll discuss these five core pillars that categorize the current threat environment:

The Weaponization of Valuation: False narratives—such as fabricated earnings leaks or “deepfaked” regulatory investigations—can trigger algorithmic trading and wipe out billions in market capitalization in minutes.

Executive Attack Surfaces: Leaders are now primary targets. Deepfake audio of a CEO or “leaked” private memos are used to manipulate internal culture, derail mergers, or facilitate fraudulent wire transfers.

Operational Sabotage: Coordinated campaigns can target supply chains or physical infrastructure (e.g., conspiracy theories leading to attacks on telecom towers or boycotts based on false labor practice claims).

The AI Acceleration Force: Generative AI allows adversaries to create high-fidelity, hyper-personalized “evidence” at scale, making traditional detection methods (like checking for typos) obsolete.

Narrative Hijacking: Adversaries exploit “moments of uncertainty”—such as earnings calls, leadership transitions, or geopolitical crises—to inject narratives that force the company into a reactive, defensive posture.

Expected Learning Outcomes

By the end of the briefing, you should have a good grounding in how to transition from passive awareness to active resilience. You’ll learn:

Shift from “Truth” to “Verification”: You’ll learn that being “right” isn’t enough. In a crisis, the winner is often whoever gets to the truth first (“speed-to-truth”). You’ll have a verification framework that prioritizes rapid internal fact-checking over emotional reaction.

Recognition of “Inoculation” Strategies: You’ll understand the concept of Prebunking: proactively educating employees and stakeholders about potential false narratives before they encounter them. This “mental vaccine” reduces the likelihood of internal panic or accidental amplification.

Cross-Functional Governance: Disinformation is not solely an IT or PR problem. You’ll walk away with a template for a Disinformation Response Team that includes:

Legal: For regulatory and litigation risks.

Finance: To monitor market sentiment and volatility.

HR: To manage internal morale and employee-led amplification.

Security/CISO: To detect deepfakes and social engineering.

Measurable Risk Metrics: You’ll be able to track tangible metrics:

Time-to-Detection: How long did it take to find the narrative?

Rumor Longevity: How long did the false claim stay active before being neutralized?

Incident Recurrence: Are the same actors attacking through different channels?
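For readers who like to see numbers, here is a rough sketch of how a response team might compute those three metrics from a simple incident log. Everything in it is an assumption for illustration: the `Incident` record, its field names, the function names and the sample entries are all hypothetical, and a real team would define "first seen" and "neutralized" in its own way.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Incident:
    """One tracked false narrative (hypothetical schema, for illustration only)."""
    actor: str             # suspected source, if it can be attributed
    channel: str           # where the narrative surfaced
    first_seen: datetime   # earliest known appearance
    detected: datetime     # when the team first noticed it
    neutralized: datetime  # when it stopped circulating


def time_to_detection(incident: Incident) -> timedelta:
    """How long the narrative circulated before the team found it."""
    return incident.detected - incident.first_seen


def rumor_longevity(incident: Incident) -> timedelta:
    """How long the false claim stayed active before being neutralized."""
    return incident.neutralized - incident.first_seen


def recurring_actors(incidents: list[Incident]) -> dict[str, set[str]]:
    """Actors that show up on more than one channel."""
    channels_by_actor: dict[str, set[str]] = defaultdict(set)
    for incident in incidents:
        channels_by_actor[incident.actor].add(incident.channel)
    return {a: c for a, c in channels_by_actor.items() if len(c) > 1}


# Made-up sample log; none of these entries describe real events.
incidents = [
    Incident("actor_a", "x",
             datetime(2026, 3, 1, 8, 0),
             datetime(2026, 3, 1, 11, 30),
             datetime(2026, 3, 2, 8, 0)),
    Incident("actor_a", "telegram",
             datetime(2026, 3, 2, 9, 0),
             datetime(2026, 3, 2, 10, 0),
             datetime(2026, 3, 2, 21, 0)),
    Incident("actor_b", "x",
             datetime(2026, 3, 3, 7, 0),
             datetime(2026, 3, 3, 7, 45),
             datetime(2026, 3, 3, 12, 0)),
]

for incident in incidents:
    print(incident.actor, incident.channel,
          time_to_detection(incident), rumor_longevity(incident))
print(recurring_actors(incidents))
```

Tracked over time, falling detection and longevity figures would suggest the team is getting faster, while an actor appearing in the recurrence list is a hint to watch additional channels.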

Remember: Disinformation does not need to be believed to be effective; it only needs to create enough doubt to paralyze decision-making.

Quote of the Week

I’m re-reading a short but magnificent book - “On Disinformation” - by Lee McIntyre, who writes:

“Neither objectivity nor neutrality require one to feign indifference between the truth and the lie; to refuse to stand up for the truth because it might look partisan is itself to succumb to partisanship.”

#####

I want to hear from you

Information integrity is such a huge and vital topic. I want to hear what you think.

Here’s my email: DisinformationWire@yahoo.com. Tell me your stories, share your thoughts, send me tips, questions and ideas. I can’t promise that I’ll be able to respond to every email, but I do read each and every one. If you are not a subscriber, I hope you’ll become one. Paid subscribers get my same-day personal response. I’m very appreciative of your interest in this important subject. Thank you.

Paul Brandus Washington / Mar. 4, 2026