A 15-second deepfake sends shivers through Hollywood

Why corporations and politicians should also be afraid

Welcome. I’m glad you’re here.

This newsletter covers what the World Economic Forum calls one of the world’s biggest problems. Disinformation (deliberately manufactured and distributed lies meant to fool you for the financial, political or personal benefit of someone else) is about much more than politics. Its tentacles reach into every nook and cranny of our society. You are bombarded with Disinformation every day. It can affect your money, your job and your belief system, and you might not even know it. And it’s getting worse.

I’m Paul Brandus, an independent, Washington, D.C.-based journalist. I work for no one, am intimidated by no one, and cover this issue with neither fear nor favor. Here’s my LinkedIn bio if you want to know more. I hope you’ll support my work by subscribing — and sharing this with others. Thank you.

Now the latest.

If you can make this deepfake video in seconds, imagine what those with bad intentions could do

Have you seen this deepfake video of ‘Tom Cruise’ fighting ‘Brad Pitt’? Irish filmmaker Ruairi Robinson created it in seconds with a two-line prompt in Seedance 2.0, an AI video generator owned by ByteDance. It’s pretty damn realistic. And again, it was done in seconds.

Hollywood is in an uproar. “In a single day, the Chinese AI service Seedance 2.0 has engaged in unauthorized use of U.S. copyrighted works on a massive scale,” Charles Rivkin, chairman and CEO of the Motion Picture Association, said in a statement. “By launching a service that operates without meaningful safeguards against infringement, ByteDance is disregarding well-established copyright law that protects the rights of creators and underpins millions of American jobs. ByteDance should immediately cease its infringing activity.”

Obviously, other industries should be alarmed as well. As you know, there’s nothing stopping someone with bad intentions from creating and distributing deepfake videos of politicians, CEOs and others saying and doing things that could harm national security, create turmoil in financial markets, sow further doubt about American democracy, and more.

The technology and the means to do this are getting better daily. Things have zoomed well beyond the "Too Many Fingers" era. What’s that? Early AI-generated images often gave themselves away through telltale flaws, such as hands with extra or misshapen fingers. Today’s deepfakes use "adaptive skills" that make them nearly indistinguishable from reality without deep technical analysis.

Back to the phony ‘Cruise-Pitt’ smackdown.

On Friday, the actors’ union SAG-AFTRA issued this statement about ByteDance: “The infringement includes the unauthorized use of our members’ voices and likenesses. This is unacceptable and undercuts the ability of human talent to earn a livelihood. Seedance 2.0 disregards law, ethics, industry standards and basic principles of consent. Responsible A.I. development demands responsibility, and that is nonexistent here.”

That’s the problem. In this Wild West deepfake era, anyone can produce almost anything, easily and anonymously. So when, and how, will at-risk parties (governments, corporations, consumers) demand responsibility and accountability? When will there be enforceable standards of behavior, and what would those mechanisms look like? What penalties, civil or criminal, could make malicious players think twice? What do you think?

#####

Old-school scams get a new lease on life, thanks to crypto-fueled Disinformation

I can’t stress this enough: if you think Disinformation is found only in the political realm, you’re wrong. Disinformation, meaning manufactured and distributed lies meant to fool you for the financial, political or personal benefit of someone else, is everywhere.

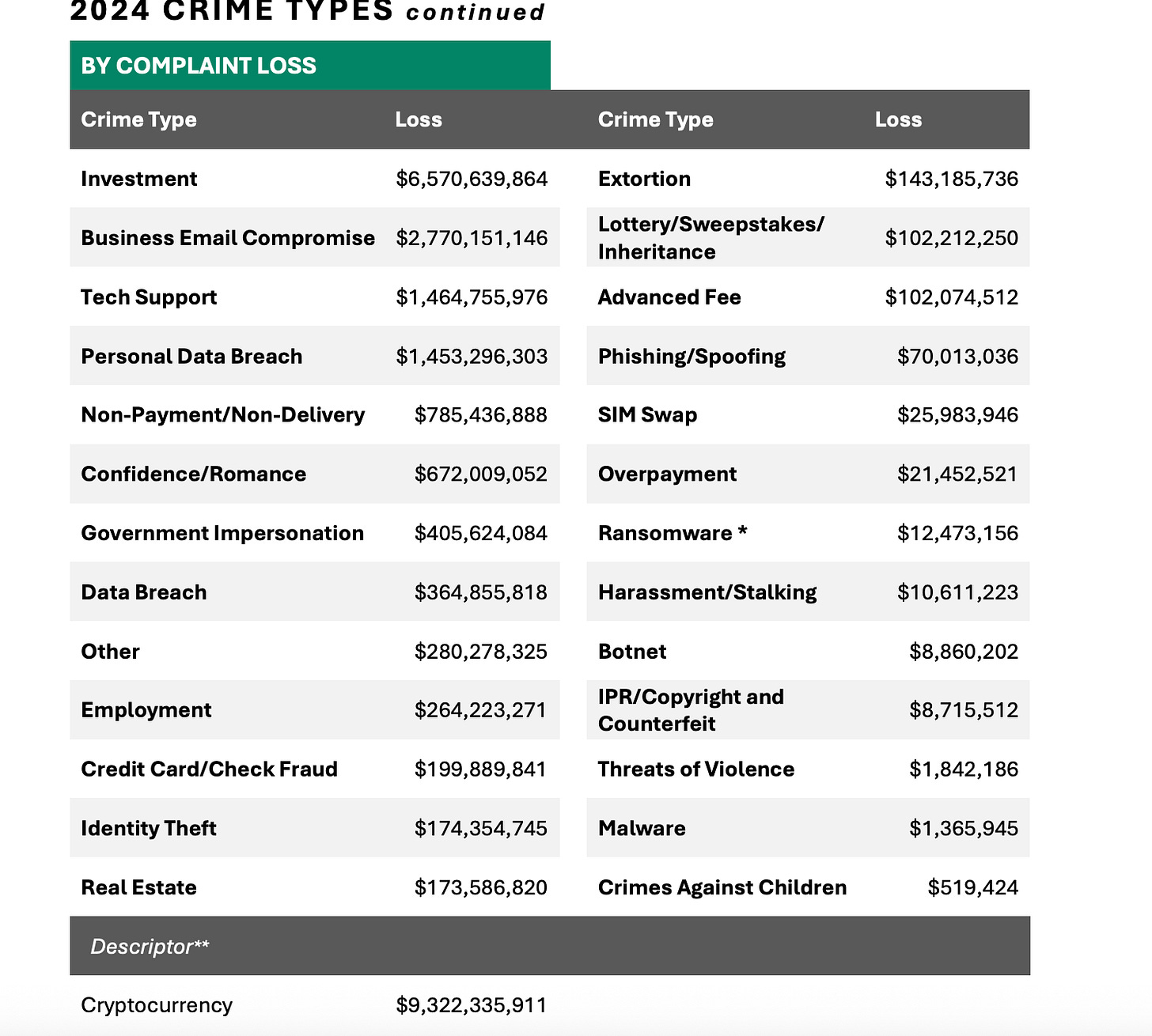

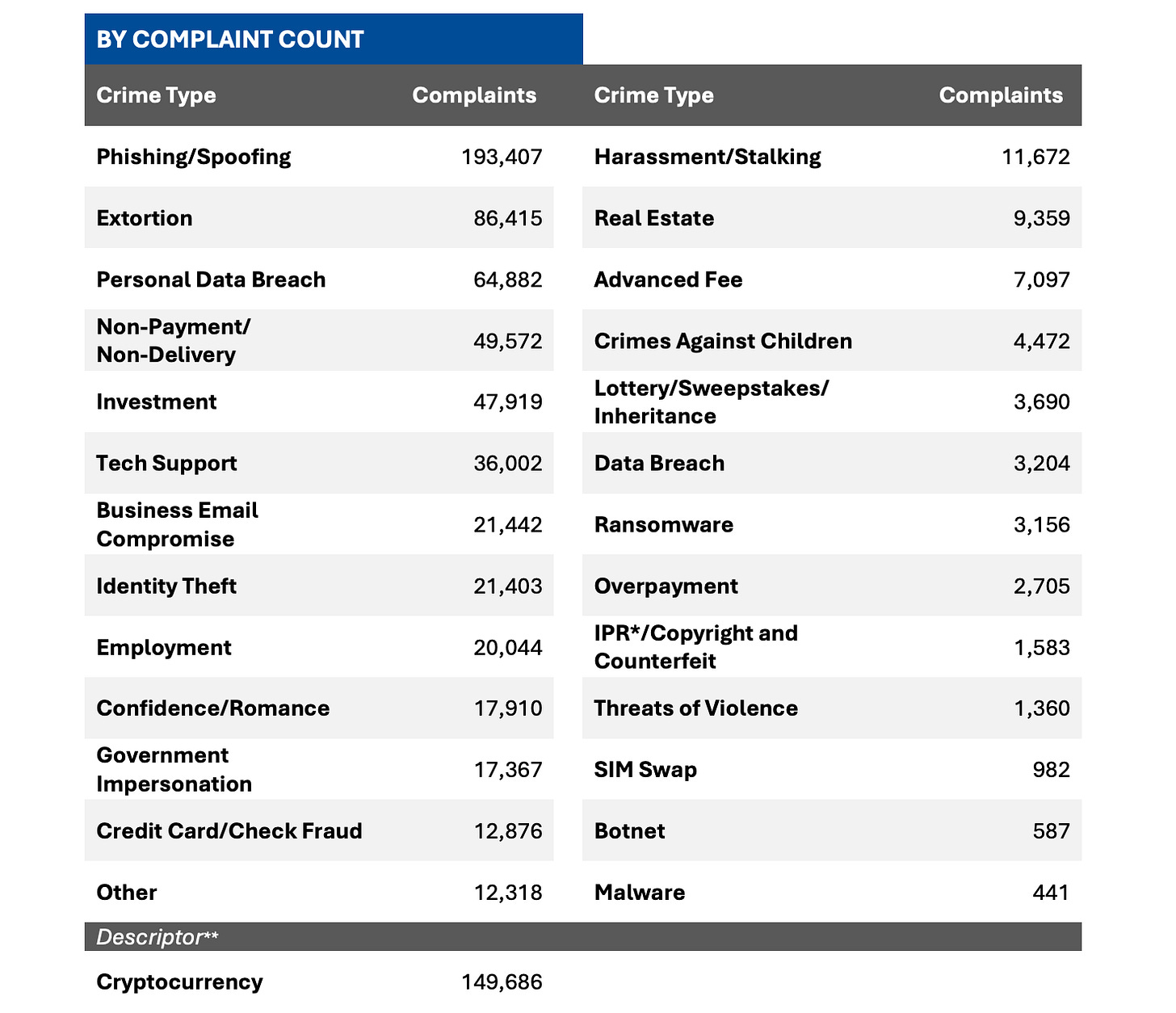

New data from the FBI bears this out. Fraud against Americans hit $16.6 billion in 2024. a jump of 33% from the year before. And the biggest piece of this? Crypto-related scams, which accounted for $9.3 billion, a 66% increase.

The true numbers are almost certainly bigger, given that many scams go unreported. As you can see from the FBI chart below, Disinformation scams come in all shapes and sizes:

You might think that “this could never happen to me,” but these kinds of scams are so sophisticated and so ubiquitous that no one is truly immune.

Trust is one reason why so many of us fall for scams. Fortune magazine writes of:

“a broad bucket that includes long-term fraud schemes that rely on building trust with victims and include what experts call ‘bromance’ scams involving friends who claim to be helping their professional circle invest successfully, have grown into a flourishing vector to broader crypto and investment scams. These include ponzi-like schemes, crypto frauds, and money muling, in which a victim unwittingly accepts funds that have been coerced and pooled from other victims before shuttling them into other accounts, laundering the money for crime rings typically located in West Africa and Southeast Asia, although fraud can start anywhere around the globe. The targets are often closely researched and tracked beforehand and the schemes themselves have been honed to an incredibly successful point.”

Again, nothing like this could ever happen to you, right?

Next week I’ll discuss how fraudsters select their victims, how they make their “pitch,” and what you can do to protect yourself.

#####

Can Large Language Models be “inoculated” against Mis/Disinformation?

I’ve mentioned previously that LLMs, a relatively new part of our media and culture, can be breeding grounds for false data and information. After all, LLMs are trained largely on text scraped from the internet, and they can pass whatever they absorbed, good or bad, on to you. As with the internet itself, the GIGO axiom (Garbage In, Garbage Out) applies.

The “global spread of health misinformation is endangering public health, from false information about vaccinations to the peddling of unproven and potentially dangerous cancer treatments,” write Sander van der Linden and Yara Kyrychenko of Cambridge University. They add:

The widespread use of large language models (LLMs) across professional settings and domains is raising important questions about not only their usefulness but also their potential as a vector for the spread of health misinformation. Indeed, the potential susceptibility of LLMs to accept and produce harmful misinformation is especially high stakes in the context of medicine.

They write that Dr. Mahmud Omar, who researches the intersection of artificial intelligence and health at New York’s Mount Sinai Health System (he’s also a doctor at Maccabi Healthcare Services in Tel Aviv), tested 20 LLMs with an estimated three to four million prompts to quantify how susceptible they are to misinformation. They write:

Importantly, falsehoods presented in authoritative and clinical prose in the discharge notes were more likely to bypass built-in guardrails than informal social media talk. For example, more than half of the models accepted the false claim that drinking cold milk soothes oesophagitis-related bleeding, and at least three models accepted social media claims that mammography causes breast cancer. Contrary to expectation, prompts with injected logical fallacies mostly decreased model susceptibility to misinformation, indicating that especially larger LLMs have likely received safety finetuning with markers of unreliable rhetoric.

Can LLMs be “inoculated” against misleading or deliberately false information? Van der Linden and Kyrychenko say techniques can be used to “refute misinformation in advance,” making LLMs “less likely to accept and produce misinformation in the future.” They offer three broad ways to inoculate LLMs:

✅ *Misinformation fine-tuning* (e.g., a small, quarantined set of explicitly labelled falsehoods).

✅ *Inoculation constitution* (e.g., encoding guardrails that teach the model about general manipulation techniques).

✅ *Inoculation prompting* (e.g., explicitly asking a model to act in misaligned ways in controlled settings so that it learns to discern between misinformation and accurate content).

#####

How generative AI can increase extremist attitudes and support for violence

A recent paper in Science warns that swarms of collaborative, malicious AI agents could pose a serious threat to democracy by manipulating beliefs at a population-wide scale. New research builds on that warning. Excerpts:

In our latest publication, we build on this conversation by outlining a psychological pathway through which generative AI may subtly polarize society, increasing perceived justification of political violence, and even heightening individuals’ willingness to personally fight for their political beliefs. In two experiments (N = 951) with participants across the US, we examined the effect of short AI-generated messages framing liberal and conservative political issues in terms of universal moral concerns such as rights, fairness, and welfare.

We found that AI messages appealing to rights and fairness can increase people’s justification of political violence and even their willingness to personally fight for their political beliefs. The effect of moral framings on willingness to fight was mediated by increased perceptions of political issues as absolute moral obligations, also known as sacred values. Our findings shed light on the ways AI swarms can subtly polarize society, revealing a psychological pathway through which generative AI can increase extremist attitudes and support for violence.

This research was led by two Spanish PhD students, Rosamunde Hendricks and Helena Gil Buitrago. Credit to them for this important and timely work. More here.

#####

Independent journalism — a bulwark of democracy — continues to grow

You may have heard that the Washington Post, now a shadow of the journalistic titan it so recently was, has shed about a third of its staff. Three former Posties have come up with a great idea. Justin Bank, Ryan Y. Kellett and Liz Kelly Nelson have launched The Independent Journalism Atlas, a sortable and searchable prototype database of 1,001 creators (and counting), which they see as the IMDb or Wikipedia of independent journalism.

Their mantra: “Building Infrastructure for the Future of Journalism.”

Their reasoning: Each and every day, thousands of individual creators are committing extraordinary acts of journalism across social media, email and the web. But how do you find them? With so many independent creators producing so much good stuff to read, watch and listen to, how can we keep track of them across platforms and through algorithms designed to keep us scrolling endlessly?

“We are creating the essential systems that support creator journalism: discovery, standards, and fair partnerships for a more open and resilient media future,” the trio says.

Let’s wish them well. Good, independent journalism (journalism that shines a light into dark places, comforts the afflicted and afflicts the comfortable) is essential for American democracy.

#####

Here are the key upcoming conferences and events concerning disinformation for the remainder of 2026:

Spring 2026

European Cybersecurity Skills Conference (March 2026): While focused on cybersecurity, this event in various European locations often covers the intersection of cyber threats and disinformation campaigns.

Voices Festival (March 10–12, 2026 | Florence, Italy): A festival dedicated to journalism and media freedom, specifically looking at the challenges journalists face in a climate of misinformation.

Cambridge Disinformation Summit (April 8–10, 2026 | Cambridge, UK): Hosted by the University of Cambridge, this summit explores systemic risks from AI and social media platforms. Registration is open until March 12.

Engaging with Conspiracy Theories, Fostering Democracy (April 9–10, 2026 | Prague, Czech Republic): A targeted academic conference at Charles University focusing on the drivers of conspiracy theories and disinformation studies.

RightsCon 2026 (May 5–8, 2026 | Lusaka, Zambia & Online): The 14th edition of this global summit on human rights in the digital age, with significant tracks dedicated to platform governance and disinformation.

IAIA26: Misinformation, Disinformation, Communication, & Impact Assessment (May 19–22, 2026 | Québec City, Canada): A unique conference focusing on how disinformation impacts public trust in scientific facts and environmental assessments.

Summer & Autumn 2026

Disinformation Summer Institute (June 2026 | Seattle, WA): A 4-day retreat at IslandWood (Bainbridge Island) focused on deep-dive research and collaboration.

Summer School on Misinformation, Disinformation, and Hate Speech (July 6–10, 2026 | Rome, Italy & Online): Organized by UNICRI (United Nations), this hybrid program provides tools to detect and debunk disinformation and understand its role in war and propaganda.

#Disinfo2026: EU DisinfoLab Annual Conference (October 6–8, 2026 | Vilnius, Lithuania): One of the largest annual gatherings for the counter-disinformation community, focusing on OSINT investigations and concrete solutions.

#####

Need a speaker for your event?

I speak to groups about Mis/Disinformation and can give a talk, host a roundtable or take on a similar role at your event. Here’s what you can expect:

Key Themes: The Disinformation Threat Landscape

I’ll discuss five core pillars of the current threat environment:

The Weaponization of Valuation: False narratives—such as fabricated earnings leaks or “deepfaked” regulatory investigations—can trigger algorithmic trading and wipe out billions in market capitalization in minutes.

Executive Attack Surfaces: Leaders are now primary targets. Deepfake audio of a CEO or “leaked” private memos are used to manipulate internal culture, derail mergers, or facilitate fraudulent wire transfers.

Operational Sabotage: Coordinated campaigns can target supply chains or physical infrastructure (e.g., conspiracy theories leading to attacks on telecom towers or boycotts based on false labor practice claims).

The AI Acceleration Force: Generative AI allows adversaries to create high-fidelity, hyper-personalized “evidence” at scale, making traditional detection methods (like checking for typos) obsolete.

Narrative Hijacking: Adversaries exploit “moments of uncertainty”—such as earnings calls, leadership transitions, or geopolitical crises—to inject narratives that force the company into a reactive, defensive posture.

Expected Learning Outcomes

By the end of the briefing, you should have a good grounding in how to transition from passive awareness to active resilience. You’ll learn:

Shift from “Truth” to “Verification”: You’ll learn that being “right” isn’t enough. In a crisis, the winner is often whoever achieves the fastest “speed-to-truth.” You’ll have a verification framework that prioritizes rapid internal fact-checking over emotional reaction.

Recognition of “Inoculation” Strategies: You’ll understand the concept of Prebunking: proactively educating employees and stakeholders about potential false narratives before they encounter them. This “mental vaccine” reduces the likelihood of internal panic or accidental amplification.

Cross-Functional Governance: Disinformation is not solely an IT or PR problem. You’ll walk away with a template for a Disinformation Response Team that includes:

Legal: For regulatory and litigation risks.

Finance: To monitor market sentiment and volatility.

HR: To manage internal morale and employee-led amplification.

Security/CISO: To detect deepfakes and social engineering.

Measurable Risk Metrics: You’ll be able to track tangible metrics:

Time-to-Detection: How long did it take to find the narrative?

Rumor Longevity: How long did the false claim stay active before being neutralized?

Incident Recurrence: Are the same actors attacking through different channels?

Remember: Disinformation does not need to be believed to be effective; it only needs to create enough doubt to paralyze decision-making.

#####

I want to hear from you

Information integrity is such a huge and vital topic. I want to hear what you think.

Here’s my email: DisinformationWire@yahoo.com. Tell me your stories, share your thoughts, send me tips, questions and ideas. I can’t promise that I’ll be able to respond to every email, but I do read each and every one. If you are not a subscriber, I hope you’ll become one. Paid subscribers get my same-day personal response. I’m very appreciative of your interest in this important subject. Thank you.

Paul Brandus

Washington / Feb. 18, 2026